>>> The follow-up to this article can be read here

Well, just as I've spent the last 6 months or more telling people that GitHub Copilot is actually a good product, and excellent value for money... They go and drop this bombshell...

GitHub Copilot

This was the plucky, scruffy little mutt of the AI model world, keen and eager. But I feel it always had a bad rep because of its association with Microsoft, in a largely anti-Microsoft, Mac-hugging, Linux-loving developer user base. Everybody is trying to make the next million-dollar viral app from their MacBook in a coffee shop, on a Zoom meeting, with their Google Workspace suite and a thousand other point solutions, with individual subscriptions to Asana, Notion, and countless more getting out of control... Sorry, carried away slightly, I digress...

So what's Copilot?

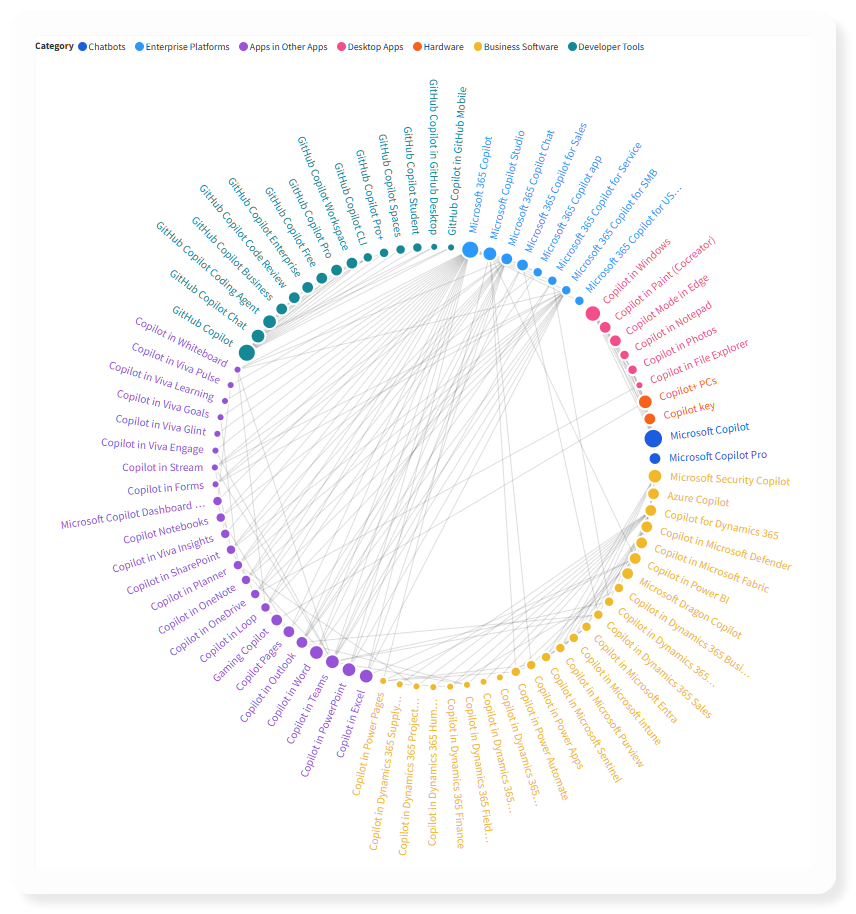

Not helped by the fact that Microsoft went (even more) crazy and labeled everything 'Copilot' to the point where nobody even knows what they've got or what they're paying for... I'm not joking, Tey Bannerman took on the pain so we don't have to and mapped out 70+ different products, SKUs, and licences that include the word Copilot from Microsoft.

I've not seen it this bad since the days of DirectX, ActiveX, erm. XBox.. shh, that still counts.

Click the image below to visit their site and play with their interactive diagram as you shake your head in bewilderment at how a marketing department could have so much power and get it so wrong. Unless confusion shows a direct relation to increased licence sales? Is it genius in disguise? They sure aren't doing it to be liked.

Back on topic

However, you'd be surprised how little Microsoft influence is under the hood of GitHub Copilot when it comes down to it.

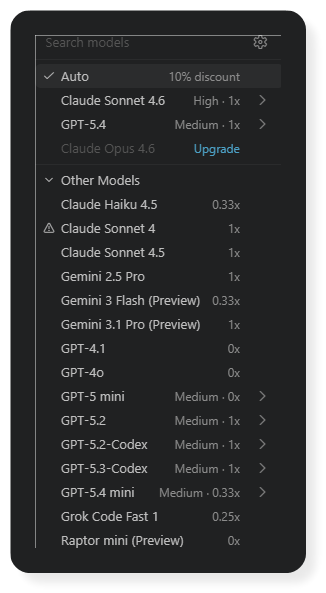

What GitHub Copilot actually gives you is a multi-vendor selection of all the latest models, and some additional 2nd tier models to boot. Not some half thought out lazy agent forced upon Windows users like the spiritual successor to Cortana.

GitHub Copilot previously had 3 plans: Free, Pro at $10 per month, and Pro+ at $39 per month.

With very few exceptions, the Pro and Pro+ gave you access to all of these...

| Provider | Model | Versions |
|---|---|---|
| OpenAI | GPT | 4.1, 5-mini, 5.2, 5.2-codex, 5.3-codex, 5.4, 5.4-mini |
| Anthropic | Claude | Haiku 4.5, Sonnet 4 / 4.5 / 4.6, Opus 4.5 / 4.6 / 4.6-Fast / 4.7 |
| Google | Gemini | 2.5 Pro, 3 Flash, 3.1 Pro |
| xAI | Grok | Grok Code Fast 1 |
| Microsoft | Raptor Mini | Fine-tuned GPT-5 |
| Microsoft | Goldeneye | Fine-tuned GPT-5.1-Codex |

Usage and Limits

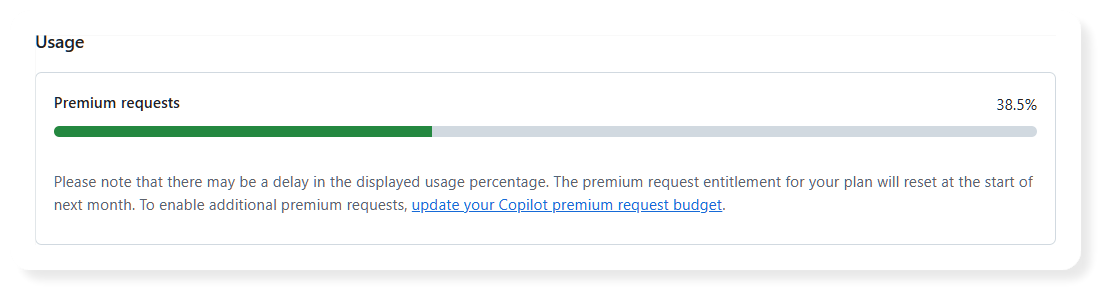

GitHub Copilot has a concept of 'requests' (not tokens or messages as such), with an allocation of 300 (Pro) or 1500 (Pro+) 'premium requests' per month. There are none of the silly 5-hour or weekly limits that you see with Anthropic or OpenAI directly. You can purchase extra usage if you go over for the month. Very simple.

Smaller / more efficient models such as Haiku are charged at 0.33x, whereas Opus is charged at 3x or 7.5x depending on the version (and the offer period they were running with Opus 4.7 until the end of April).

Models like Raptor Mini, GPT-4o, GPT 4.1, and GPT 5-mini are effectively unlimited, being counted with a zero multiplier (i.e., they don't consume premium requests when used).

Others, like Haiku, Gemini 3 Flash, and GPT 5.4 Mini, are charged at 0.33x.
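To make the multiplier maths concrete, here's a rough sketch in Python. The multipliers and plan allowances are the ones quoted in this post, not an official price list. The model keys, the function name, and the month of usage are all made up for illustration, and Opus is pinned at 3x purely as an example.

```python
# Multipliers as quoted in this post; not an official price list.
MULTIPLIERS = {
    "gpt-4.1": 0.0,
    "gpt-5-mini": 0.0,
    "raptor-mini": 0.0,
    "haiku-4.5": 0.33,
    "gemini-3-flash": 0.33,
    "gpt-5.4-mini": 0.33,
    "gpt-5.4": 1.0,
    "opus": 3.0,  # assumption: the 3x tier; some versions were 7.5x
}

# Monthly 'premium request' allowance per plan, as quoted in this post.
PLAN_ALLOWANCE = {"pro": 300, "pro+": 1500}


def premium_requests_used(usage):
    """Sum requests * multiplier for each model in a {model: count} dict."""
    return sum(MULTIPLIERS[model] * count for model, count in usage.items())


# Hypothetical month of usage (illustrative numbers only).
month = {"gpt-4.1": 500, "haiku-4.5": 100, "gpt-5.4": 120, "opus": 40}
used = premium_requests_used(month)
remaining = PLAN_ALLOWANCE["pro"] - used
print(f"used {used:.0f} of {PLAN_ALLOWANCE['pro']}, {remaining:.0f} left")
```

The point of the sketch: the 500 requests on a zero-multiplier model cost nothing, while 40 Opus requests eat as much allowance as 120 requests on a 1x model.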

So what's the catch? That's a lot of models, and a lot of usage? Even on the cheaper 'Pro' plan for $10 per month, not to be sniffed at... right?

Size isn't Everything (but it helps)

I'm talking of context length, obviously.

The one notable difference between getting these models from the vendor and using them via GitHub Copilot is where they are hosted, and the supported context length.

All of the OpenAI models, and Microsoft's fine-tuned versions are hosted in GitHub's Azure Infrastructure (using Microsoft's partnership with OpenAI, effectively meaning that Microsoft are hosting these models, not the vendor).

Anthropic's models are hosted by a mix of Bedrock (Amazon Web Services), Anthropic themselves, and Google Cloud Platform.

Google's and xAI's models are hosted on the providers' own infrastructure.

Although not published (anywhere that I could see anyway), here's the difference in context length.

Claude models all seem limited to 200k tokens, with compaction happening somewhere around 80% of that (~160k tokens). Compare that with up to 1M tokens directly from Anthropic.

GPT models have a larger (yet still limited) context of 400k tokens. Better, but still short of the 1M tokens you get directly from OpenAI.
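If you want to keep an eye on how close a session is to that compaction point, the arithmetic is simple enough to sketch. The window sizes and the ~80% figure are just my observations from above, not published numbers, and applying the same 80% to the GPT models is purely an assumption. The function names are hypothetical.

```python
# Context windows as observed in this post (not published figures).
CONTEXT_WINDOW = {"claude": 200_000, "gpt": 400_000}

# Assumption: compaction kicks in at roughly 80% of the window.
# This was only observed for Claude; using it for GPT is a guess.
COMPACTION_PERCENT = 80


def compaction_threshold(family):
    """Approximate token count at which compaction would start."""
    return CONTEXT_WINDOW[family] * COMPACTION_PERCENT // 100


def headroom(family, tokens_used):
    """Tokens left before the compaction threshold (never negative)."""
    return max(0, compaction_threshold(family) - tokens_used)


print("claude threshold:", compaction_threshold("claude"))
print("headroom after 100k tokens:", headroom("claude", 100_000))
```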

So small is useless then?

Nah, not at all champ, keep your pecker up... It's what you do with it that counts. Here's a few tips to get the most out of your size... just the tips.

- Concise and direct AGENTS.md (or CLAUDE.md / etc) file (split up larger files into separate documents, and link to them so the agent can choose to follow them depending on your prompt).

- Don't overload the agent with loads of MCP servers and tools (their definitions all take up context space). If possible, use Skills. They work in a similar way to the nested AGENTS.md files: a skill exposes its name and description, and if the agent decides it needs a particular skill, it fetches the full schema and payload details into its context as it goes.

- Use sub-agents or delegate tasks to other agents. This allows each task to be broken up into a specific prompt, providing only the information needed to carry it out. When it's done with it, it hands back the results to the main agent.
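To get a feel for why tool definitions matter, here's a back-of-the-envelope sketch. It uses a crude "4 characters per token" rule of thumb rather than a real tokenizer, and the tool definitions are invented, only loosely shaped like an MCP tool listing. Treat the numbers as relative, not absolute.

```python
import json

CHARS_PER_TOKEN = 4  # assumption: crude rule of thumb, not a real tokenizer


def estimate_tokens(text):
    """Very rough token estimate from character count."""
    return len(text) // CHARS_PER_TOKEN


def tool_overhead(tools):
    """Estimated tokens spent just describing tools to the model."""
    return sum(estimate_tokens(json.dumps(tool)) for tool in tools)


# Hypothetical tool definitions, loosely shaped like an MCP tool listing.
full_tools = [
    {
        "name": f"tool_{i}",
        "description": "Does something useful with a repository. " * 5,
        "inputSchema": {
            "type": "object",
            "properties": {"path": {"type": "string"}},
        },
    }
    for i in range(40)
]

# Skills-style listing: only a name and a one-line description up front.
skill_stubs = [
    {"name": tool["name"], "description": "Repo helper."} for tool in full_tools
]

print("full tool definitions:", tool_overhead(full_tools), "tokens (approx)")
print("name + description only:", tool_overhead(skill_stubs), "tokens (approx)")
```

The exact figures will be off, but the gap between the two listings is the context you get back for actual work.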

It had Stamina to go all night

Until recently, that is, when yet another act of self-sabotage was introduced to stop people having fun.

It used to be a simple monthly limit on the 'premium requests'. When you ran out, you could wait until the plan renewed the next month, or you could buy extra.

The article released today mentions that they also introduced session and weekly limits very recently, to combat the kill-joy party-pooper AI tech bros using OpenClaw.

"We introduced weekly limits recently to control for parallelized, long-trajectory requests that often run for extended periods of time and result in prohibitively high costs."

I don't see that limit in my subscription yet, perhaps it was for new sign-ups, or I'll see it rolled out soon.

Where can I use GitHub Copilot

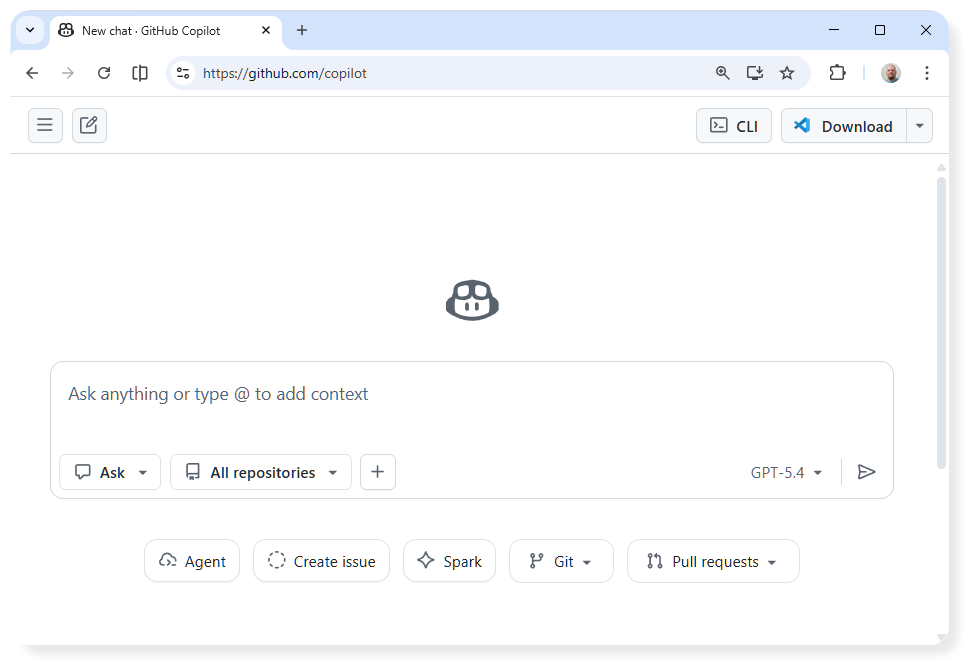

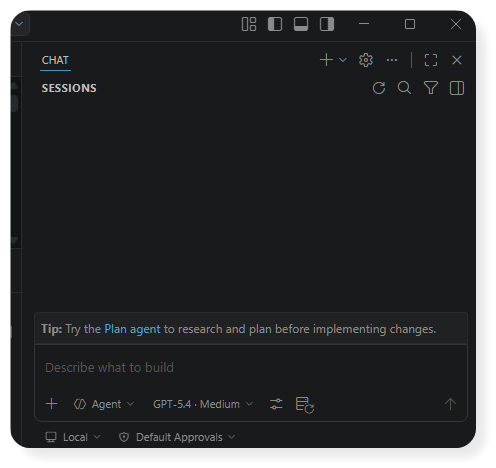

GitHub Copilot is available in a browser and works just like Claude Code on the web, but it's automatically linked to your GitHub account, so it can be given access to your repos just by selecting them. And then it'll whirr away in the cloud doing your bidding.

It's also available as an IDE plugin, for VS Code, Visual Studio, JetBrains, Eclipse, Xcode.. And probably more unofficial plugins for other IDEs.

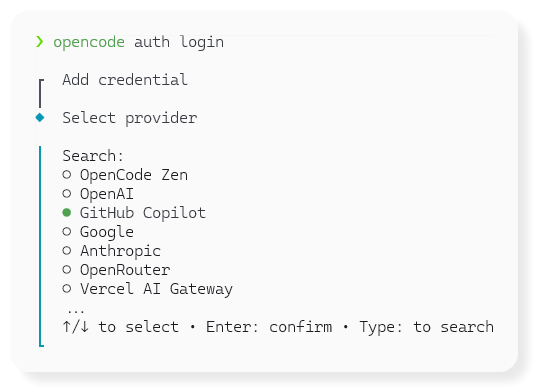

It's also available as a CLI/TUI application. Use `npm install -g @github/copilot` to install it.

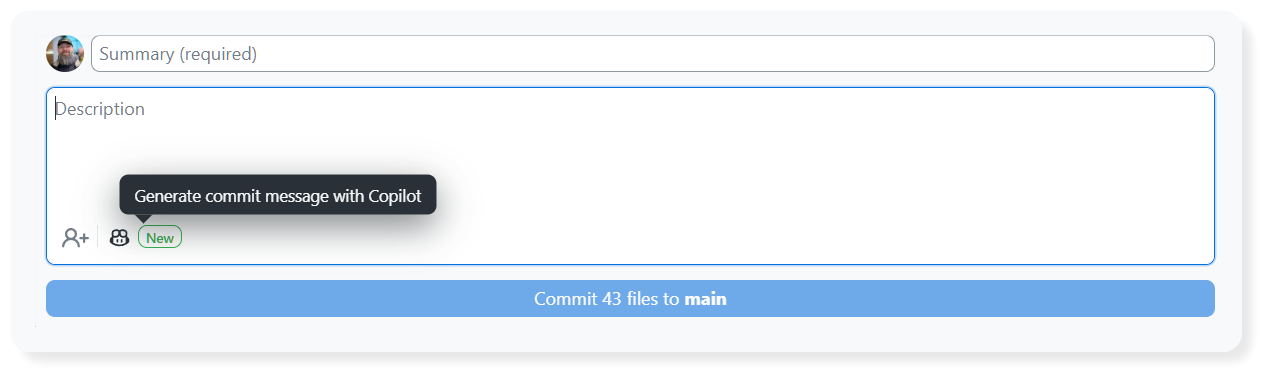

It's also bundled into GitHub Desktop, helping you create commits / PRs by reading the code changed in the files.

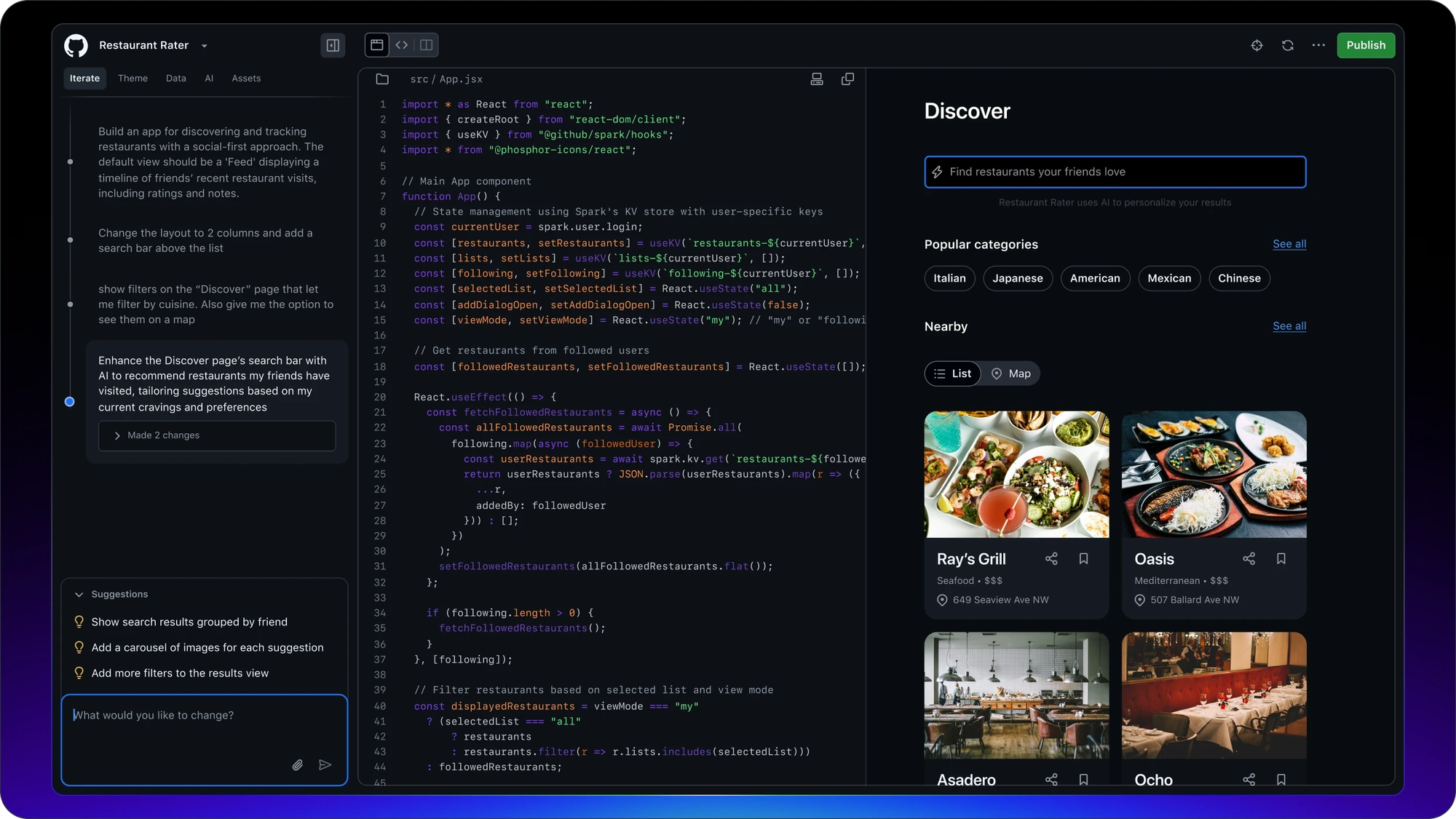

And if you're on Pro+ (or whatever abomination comes in to replace it), you also get access to GitHub Spark, their attempt to let you 'one-shot' the app of your dreams. Seems like it's aimed at people that can't use a command line tool, nor can they handle VS Code, but I've never tried it so I could be way off the mark.

And my personal favourite (more in-depth post coming soon)... OpenCode!

Yesterday's Conclusion

Would I recommend GitHub Copilot? Yes, absolutely. For $39 a month, it provided a broad set of models from OpenAI, Anthropic, and Google (yeah, ok.. and xAI's Grok).

You got GPT 5.4 with 400k context at a 1x multiplier, and 5.4-mini at 0.33x, as well as Sonnet/Opus/Haiku, even if only at 200k context.

It used to be the best deal out there that I found.

Today's Conclusion

Then they decided to drop Opus from the Pro plan, making it only available on Pro+. But still, at $39 a month for 4 vendors and ~16 models, it's pretty good.

But I'm going to stop putting my neck on the line each time I say "GitHub Copilot... nah, not that Copilot... that's different.. yeah Microsoft Copilot has been pretty shit. I know.. I know. this is different!".

I'll wait to see what else they butcher in the plans, and what price vs premium request they land at.

Until then, OpenAI ChatGPT Plus, or Claude Pro is the next best alternative at that price point until you get serious with the GPT Pro or Claude Max plans.